How Cameras Work

Let’s look briefly at how digital cameras work. Here’s an overly simplified overview:

- Light enters through the lens and is recorded by the sensor. Photodiodes on the sensor create an electrical signal, which the processor converts into a digital value. That value is stored in the buffer and then written to a memory card.

Sensor Size

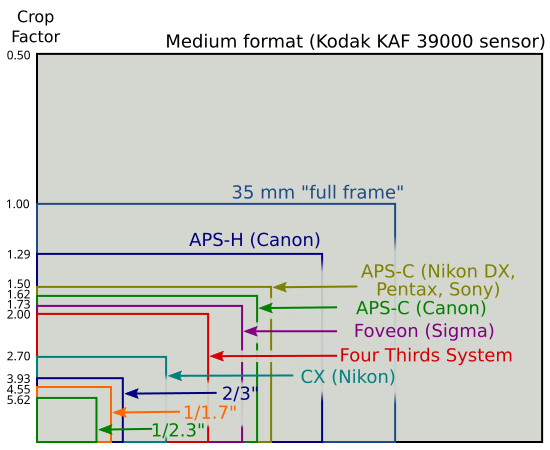

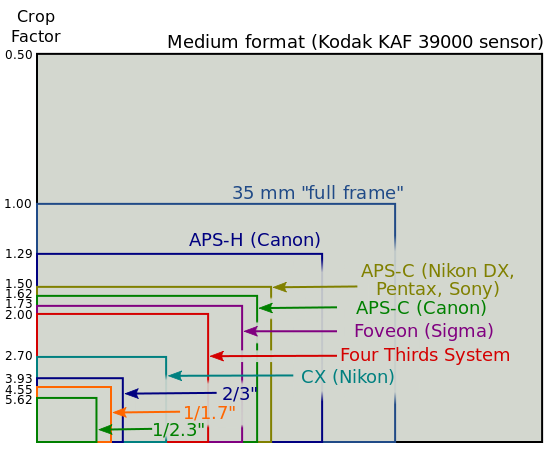

A sensor is the silicon chip inside your camera that converts photons of light coming from your lens into voltages. The larger the sensor, the more light it can collect to create your image. Let’s look at the approximate horizontal sensor width of various sensors (some models were “rounded” into the nearest category):

- Compact camera: 5.8mm (Canon A570)
- Advanced compact: 7.6mm (Fuji F30, F50, Canon G9/G10, Ricoh GX100, Olympus 5050/7070, Olympus SP350, Nikon P6000, Fuji E900)
- High-end compact: 8.8mm (Olympus 8080, Panasonic LX3)
- Micro Four Thirds camera: 17mm
- Olympus dSLR: 17mm
- Sony NEX-5: 24mm
- Nikon D300: 24mm
- Full-frame (Nikon D3, Canon 5D): 35mm

Here is a diagram showing camera sensor sizes.

Sensor types – CCD vs CMOS

CCD (Charge-Coupled Device) and CMOS (Complementary Metal Oxide Semiconductor) are two common types of sensors found in cameras. Most recent dSLR cameras use CMOS sensors.

CMOS sensors have tiny transistors associated with each pixel, and each pixel is read individually. In a CCD sensor, the data is transferred across the pixel array and converted to an analog signal in an output node separate from the pixels. CMOS sensors require much less power. Because the CCD lacks transistors on the pixels, the CCD pixel is more sensitive to light, which can theoretically lead to less noise.

Photosite (photosensor, photodiode)

The photosite, also known as a photodiode, is an area on the camera sensor that captures light and converts it into a signal. Each photosite is dedicated to either red, green, or blue, and cameras use internal algorithms to interpolate accurate RGB color values for each pixel.

Pixels

Pixels are the building blocks of an image. The word pixel comes from the phrase “picture element”. When light hits the photosensor of a camera, the signals are converted into a value for each pixel. In a JPEG file, each pixel actually has 3 values, ranging from 0-255, for red, green and blue, known as an RGB value. A photosite (either red, green, or blue) is usually mapped to a pixel (which has an RGB value). Interpolation from neighboring photosites is used to compute the pixel RGB value.

The terms pixel and photosite are sometimes used interchangeably. I like to think of a photosite as the physical device on the sensor exposed to the light, and the pixel as what is in my image.
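The interpolation from neighboring photosites can be sketched in a few lines of Python. This is only an illustration, not what any particular camera does: it assumes an RGGB Bayer layout (the article does not name the filter pattern), and the function names and sample values are made up. It estimates the green value at a red or blue photosite by averaging the green photosites directly beside it.

```python
def bayer_color(row, col):
    """Color filter over a photosite, assuming an RGGB Bayer layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def interpolate_green(mosaic, row, col):
    """Estimate the green value for the pixel at (row, col).

    Green photosites return their own reading. Red and blue photosites
    average their up/down/left/right neighbors, which are always green
    in an RGGB layout.
    """
    if bayer_color(row, col) == "G":
        return mosaic[row][col]
    neighbors = []
    for d_row, d_col in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        r, c = row + d_row, col + d_col
        if 0 <= r < len(mosaic) and 0 <= c < len(mosaic[0]):
            neighbors.append(mosaic[r][c])
    return sum(neighbors) / len(neighbors)


# Hypothetical raw photosite readings (one value per photosite).
mosaic = [
    [200, 100, 210, 110],
    [90, 50, 120, 60],
    [190, 130, 220, 140],
    [80, 40, 150, 70],
]

print(bayer_color(2, 2))                # R
print(interpolate_green(mosaic, 2, 2))  # 135.0
```

Real cameras use more sophisticated algorithms, and they interpolate the missing red and blue values in a similar way.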

Resolution

The resolution of an image is the number of pixels per inch (often quoted as dots per inch, DPI). 72 DPI is sufficient for viewing on a monitor, but 300 DPI is required for high-quality prints. Based on the pixel count of a sensor (e.g. 4288 pixels across on a D300 sensor), you can calculate the theoretical maximum print size: about 14 inches across at 300 DPI for a photo from the D300. In reality, when the pixel data is high quality, the print can go much larger. Trying to cram too many pixels onto a camera sensor will result in more noise and lower-quality pixel data. This is why you can print a 12-megapixel photo from a dSLR larger than a 12-megapixel photo from a compact camera: the dSLR sensor is larger, so the photosensors that generate the pixel data are larger, better quality, and have less noise.
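The print-size arithmetic is just pixels divided by pixels per inch. Here is a minimal sketch; the function name and the 3000-pixel example width are made up for illustration.

```python
def max_print_width_inches(pixels_across, dpi=300):
    """Width in inches at which an image prints at the given pixels per inch."""
    return pixels_across / dpi


# A hypothetical image that is 3000 pixels across:
print(max_print_width_inches(3000))                    # 10.0 (high-quality print)
print(round(max_print_width_inches(3000, dpi=72), 1))  # 41.7 (monitor resolution)
```

This is only the theoretical figure. As noted above, good pixel data can be printed larger than the number suggests.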

Photosensors range in size from 2-8 microns. Bigger is better, so check out your camera to see how big your photosensors are. Larger photosensors can collect more light.

Interpolation

Some compact cameras do not have enough photosensors to justify the resolution of the images they create. They use interpolation to guess at pixel values. This is why some resolution numbers on small sensors must be met with suspicion, and why their images can’t be printed as large as those from higher-quality cameras. For example (not to pick on Fuji), some Fuji cameras suddenly “increased” in resolution from 6 to 12 megapixels.

Bit-depth

The bit depth is the number of bits of data that each pixel holds. 8 bits corresponds to 256 different tonal values. A 12-bit raw file from a dSLR can hold 4,096 tones, while a 14-bit raw file can hold 16,384 tones. Shooting in 14-bit mode, when available, can show slightly more detail in dark shadows and highlights, although the differences are sometimes hard to see. The raw files will be about 20% larger.
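The tone counts above come from raising 2 to the power of the bit depth. A quick check in Python (the function name is just for illustration):

```python
def tonal_values(bit_depth):
    """Number of distinct values a channel with the given bit depth can hold."""
    return 2 ** bit_depth


for bits in (8, 12, 14):
    print(bits, tonal_values(bits))
# 8 256
# 12 4096
# 14 16384
```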

Dynamic Range

I want to start off with some definitions. The dynamic range of a photo is defined as the ratio between the darkest and lightest parts of the photo. The dynamic range of a camera is the largest dynamic range that can be captured by the camera sensor in a raw file. The dynamic range in a JPEG file will be smaller unless it is processed in a raw editor.

Dynamic range can be expressed in terms of stops (e.g. 10 stops), or as a ratio (1:1,000). To convert stops to a ratio, raise 2 to the power of the number of stops. For example, a 6-stop dynamic range = 2^6 = 1:64.
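Here is the same conversion as a small Python sketch. Going the other way, from a ratio back to stops, uses the base-2 logarithm. The function names are made up for illustration.

```python
import math


def stops_to_ratio(stops):
    """Contrast ratio (the N in 1:N) for a dynamic range given in stops."""
    return 2 ** stops


def ratio_to_stops(ratio):
    """Dynamic range in stops for a contrast ratio of 1:ratio."""
    return math.log2(ratio)


print(stops_to_ratio(6))                # 64
print(round(ratio_to_stops(1000), 1))   # 10.0
```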

Let’s look at some common dynamic ranges:

- Human eye: 1:10,000 (13-14 stops)
- Outdoor sunlit scene: 1:1,000 (10-11 stops)
- dSLR camera: 1:512 (9 stops)
- Compact camera: 1:256 (8 stops)
- Color film: 1:256 (8 stops)
- Printed image, glossy paper: 1:128 (7 stops)
- Printed image, matte paper: 1:32 (5 stops)
- Indoor scene: 1:64 (6 stops)

As you can see, the human eye is capable of seeing much more dynamic range than your camera can capture. This problem has plagued photographers forever. Your challenge as a photographer is to manage the dynamic range of what you are shooting.

For maximum dynamic range

- Shoot at base ISO. Dynamic range is decreased at higher ISOs.
- Use a camera with a larger sensor. Full-frame cameras have the largest dynamic range.
- Watch your exposures. Decide ahead of time whether you want to sacrifice shadows, highlights, or a little of both.

Sensor noise

Noise is the result of random inaccuracies that occur when light hits the photosensor and is converted to a signal. Since putting your camera on a higher ISO setting simply amplifies this signal, the noise is also amplified. A more meaningful measure of noise is the signal-to-noise ratio (SNR). A high SNR means the noise is less noticeable in the image. This is why noise is worse in the shadow areas: there is less signal in the SNR formula. This is also the basis of the rule “expose to the right”: areas of high exposure will have a higher SNR than darker areas, even when the exposure is brought back down. Since most underwater (UW) photography is done at low or base ISO, noise is normally not a consideration in underwater photography, with some exceptions (e.g. low-light wreck photography). Books on advanced digital photography go into noise in much more detail.
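To see why shadows are noisier, here is a rough sketch that assumes the only noise source is photon shot noise, where the noise equals the square root of the number of photons collected. That is a simplification not spelled out above, since real sensors also add read noise and other sources. The function name and photon counts are made up for illustration.

```python
import math


def shot_noise_snr(photons):
    """Signal-to-noise ratio when noise is photon shot noise only.

    Signal = photons, noise = sqrt(photons), so SNR = sqrt(photons).
    """
    if photons < 0:
        raise ValueError("photon count cannot be negative")
    return math.sqrt(photons)


print(shot_noise_snr(10000))  # 100.0 (a bright area)
print(shot_noise_snr(100))    # 10.0  (a deep shadow)
```

Under this model, an area that collects 100 times more light has 10 times the signal-to-noise ratio, which is why brighter exposures look cleaner.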

Some technical differences between compact cameras and dSLRs

Compact cameras have smaller sensors than cropped-sensor dSLRs. Canon (1.3x – 1.6x) and Nikon (1.5x) sensors are larger than Olympus sensors (2.0x crop). Full-frame dSLRs have the largest sensors.
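The crop factors quoted here roughly follow from the approximate sensor widths listed earlier. The sketch below treats the crop factor as the full-frame width divided by the sensor width. That is an approximation: crop factor is normally defined on the sensor diagonal, and sensors with a different aspect ratio (such as Olympus) will come out slightly off. The names are made up for illustration.

```python
# Approximate full-frame width from the sensor list earlier in this article.
FULL_FRAME_WIDTH_MM = 35


def approx_crop_factor(sensor_width_mm):
    """Rough crop factor: full-frame width divided by this sensor's width."""
    return FULL_FRAME_WIDTH_MM / sensor_width_mm


# Widths in mm, taken from the approximate list earlier in this article.
for name, width_mm in (("Nikon D300", 24), ("Olympus dSLR", 17), ("Compact camera", 5.8)):
    print(name, round(approx_crop_factor(width_mm), 1))
# Nikon D300 1.5
# Olympus dSLR 2.1
# Compact camera 6.0
```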

Because of their smaller sensors, compact cameras have a larger depth of field than dSLRs at the same aperture. A macro photograph taken with a point-and-shoot at F8 will have considerably more depth of field than a dSLR photo shot at F8.

Noise at higher ISOs will be considerably higher on a compact camera. In general, the larger the sensor, the less noise given the same number of megapixels.

With bright light or powerful strobes, optimal shutter speeds, apertures and ISOs can often be selected. However, underwater, light is often at a premium, and tradeoffs must be made between the three.